This article points to a webinar given by Chris Jefferson (Advai CTO) and Nick Frost (CRMG Lead Consultant) in association with PECB. The recording is publicly available here: PECB - Managing ISO 31000 Framework in AI Systems - The EU ACT and other regulations

Risk Framework for AI Systems

Words by

Alex Carruthers

About the Webinar

The management of AI systems is a shared responsibility. By implementing the ISO 31000 Framework and complying with emerging regulations like the EU AI Act, we can jointly create a more reliable, secure, and trustworthy AI ecosystem.

Among other topics, the webinar covers:

- Understanding AI and the regulatory landscape

- AI and the threat landscape

- A risk-driven approach to AI assurance, based on ISO 31000 principles

- Stress testing to evaluate risk exposure

Presenters:

Chris Jefferson

Chris is the Co-Founder and CTO at Advai, where he works on applying defensive techniques to help protect AI and machine learning applications from being exploited. This involves work in DevOps and MLOps to create robust and consistent products that support multiple platforms, such as cloud, local, and edge.

Nick Frost

Nick Frost is Co-founder and Lead Consultant at CRMG. Nick’s career in cyber security spans nearly 20 years. Most recently, Nick has held leadership roles at PwC as Group Head of Information Risk and at the Information Security Forum (ISF) as Principal Consultant. At PwC, he designed and implemented best-practice solutions that made good business sense, prioritised key risks to the organisation, and helped minimise disruption to ongoing operations. Whilst at the ISF, Nick led their information risk projects and delivered many of the consultancy engagements to help organisations implement leading thinking in information risk management.

Nick's combined experience as a cyber risk researcher and practitioner, designing and implementing risk-based solutions, places him as a leading cyber risk expert. Prior to cyber security, and after graduating from UCNW and Oxford Brookes, Nick was a geophysicist in the oil and gas industry.

Key Points of the Webinar

Navigating the AI Revolution: A Comprehensive Overview

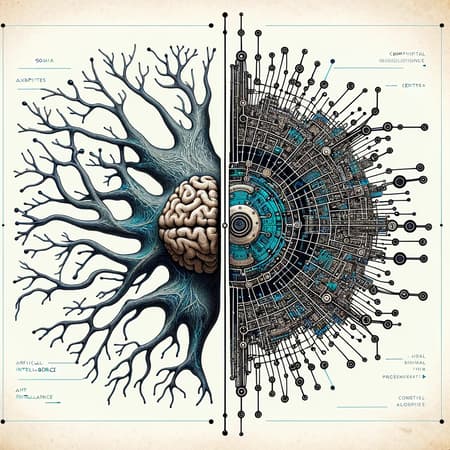

- The AI Phenomenon: 2023 marked a significant year in AI advancement, with notable progress from OpenAI, including ChatGPT, and new government regulations. Businesses now see AI as both an enabler and a risk that needs careful management.

- Understanding AI and Regulatory Changes: AI's definition and its integration into various sectors are evolving. Staying abreast of imminent regulations and frameworks such as the EU AI Act and the US Blueprint for an AI Bill of Rights is crucial, as these will profoundly affect how AI is used and managed.

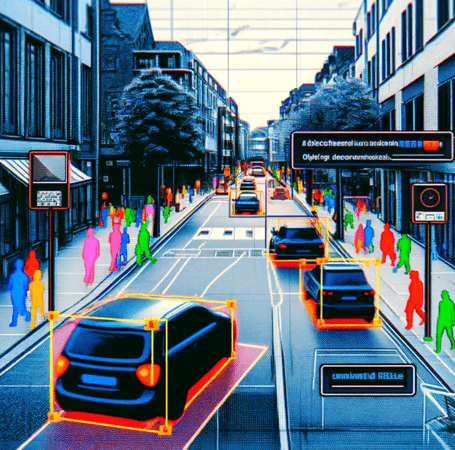

- AI and the Threat Landscape: AI introduces a unique threat landscape, including risks like data poisoning, overreliance, evasion attacks, and discrimination. Current frameworks often struggle to manage these challenges.

- A Risk-Driven Approach to AI Assurance: Based on ISO 31000 principles, a structured approach to AI risk management is recommended. This includes understanding AI's readiness within an organisation, leveraging existing risk architectures, and incorporating stress testing for AI systems.

- Stress Testing AI Systems: Rigorous stress testing of AI systems is essential to understand and mitigate risks. This involves assessing AI systems' performance, robustness, and reliability under various scenarios.

- Next Steps in AI Adoption: Practical steps for AI integration include understanding AI usage, determining risk awareness, leveraging existing risk architecture, undertaking risk assessments, and continuously monitoring AI risks.

By understanding the challenges and opportunities that AI presents, organisations can harness its potential safely and effectively.
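To make the stress-testing point above more concrete, here is a minimal sketch in Python. It is not the method presented in the webinar or any Advai tooling. It assumes a hypothetical toy classifier (`toy_model`) and one simple scenario, Gaussian noise added to the inputs, and measures how accuracy changes as the noise grows. All names and parameters here are illustrative assumptions.

```python
import random
from typing import Callable, Dict, Sequence


def toy_model(x: float) -> int:
    """Hypothetical stand-in for a trained classifier: predicts 1 when x > 0.5."""
    return 1 if x > 0.5 else 0


def accuracy(model: Callable[[float], int],
             inputs: Sequence[float],
             labels: Sequence[int]) -> float:
    """Fraction of inputs for which the model's prediction matches the label."""
    correct = sum(1 for x, y in zip(inputs, labels) if model(x) == y)
    return correct / len(labels)


def stress_test(model: Callable[[float], int],
                inputs: Sequence[float],
                labels: Sequence[int],
                noise_levels: Sequence[float],
                seed: int = 0) -> Dict[float, float]:
    """Evaluate accuracy after adding Gaussian noise of each given standard deviation."""
    rng = random.Random(seed)  # fixed seed so the run is repeatable
    results: Dict[float, float] = {}
    for sigma in noise_levels:
        perturbed = [x + rng.gauss(0.0, sigma) for x in inputs]
        results[sigma] = accuracy(model, perturbed, labels)
    return results


# Illustrative evaluation set: uniform random inputs, labelled by the toy model
# itself, so accuracy on clean (unperturbed) data is perfect by construction.
data_rng = random.Random(42)
inputs = [data_rng.random() for _ in range(1000)]
labels = [toy_model(x) for x in inputs]

report = stress_test(toy_model, inputs, labels, noise_levels=[0.0, 0.05, 0.1, 0.2])
for sigma, acc in report.items():
    print(f"noise sigma={sigma:.2f} -> accuracy={acc:.3f}")
```

In practice, the noise would be replaced by scenarios relevant to the system under test, and the resulting accuracy figures could feed into a risk assessment as one indicator of robustness.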

Who are Advai?

Advai is a deep tech AI start-up based in the UK. It has spent several years working with UK government and defence to understand and develop tooling for testing and validating AI. This tooling allows KPIs to be derived throughout the AI lifecycle, so that data scientists, engineers, and decision makers can quantify risks and deploy AI in a safe, responsible, and trustworthy manner.

If you would like to discuss this in more detail, please reach out to contact@advai.co.uk